ITIF Technology Explainer: What Is Artificial Intelligence?

Artificial intelligence (AI) is a branch of computer science devoted to creating computer systems that perform tasks characteristic of human intelligence, such as learning and decision-making. AI overlaps with other areas of study, including robotics, natural language processing, and computer vision.

AI offers many functions, including:

- Monitoring: AI can rapidly analyze large amounts of data and detect abnormalities and patterns.

- Discovering: AI can extract insights from large datasets, often referred to as data mining, and discover new solutions through simulations.

- Predicting: AI can forecast or model how trends are likely to develop, thereby enabling systems to predict, recommend, and personalize responses.

- Interpreting: AI can make sense of patterns in unstructured data such as images, video, audio, and text.

- Interacting: AI can enable humans to more easily interact with computer systems, coordinate machine-to-machine interactions, and engage directly with objects.
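As a rough illustration of the monitoring function listed above, the sketch below flags abnormal values in a stream of readings using a simple z-score rule. This is only a statistical stand-in for the idea of detecting abnormalities; production systems typically rely on learned models. The function name `find_anomalies`, the threshold, and the sensor readings are all hypothetical and invented for this example.

```python
from statistics import mean, stdev


def find_anomalies(readings, threshold=2.0):
    """Return (index, value) pairs whose z-score exceeds the threshold.

    Hypothetical helper: a z-score rule is one of the simplest ways to
    flag values that sit far from the rest of the data.
    """
    mu = mean(readings)
    sigma = stdev(readings)
    if sigma == 0:
        # All readings are identical, so nothing stands out.
        return []
    return [
        (i, x)
        for i, x in enumerate(readings)
        if abs(x - mu) / sigma > threshold
    ]


# Invented sensor readings; one value is clearly out of line.
readings = [10.1, 9.8, 10.0, 10.2, 9.9, 10.1, 25.0, 10.0, 9.7, 10.3]
anomalies = find_anomalies(readings)
print(anomalies)
```

With these invented readings, only the value 25.0 is far enough from the mean to be flagged.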

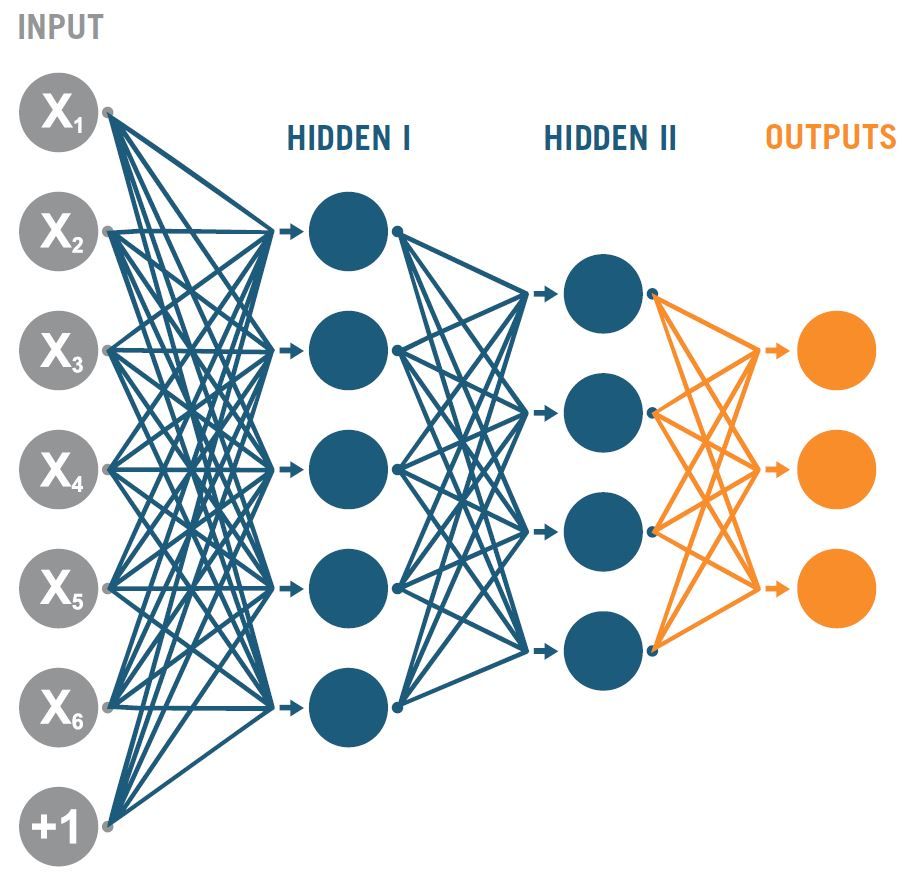

Machine learning is one of the most important subfields of AI. It focuses on building systems that can learn and improve from experience without being explicitly programmed with specific solutions. An important development in machine learning is deep learning. Deep learning involves processing multiple layers of abstractions of data and using these abstractions to identify patterns, much like the way people learn through changes in the configuration of the neurons in their brains in response to various stimuli.
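To make "learning from experience without being explicitly programmed" concrete, here is a minimal sketch, assuming the simplest possible model: a straight line fitted by gradient descent. The program is never told the rule behind the data; it only sees example pairs and adjusts two numbers to reduce its error. The function name `fit_line`, the learning rate, and the example data are hypothetical choices for illustration.

```python
def fit_line(xs, ys, learning_rate=0.01, epochs=5000):
    """Learn slope w and intercept b for y ~ w * x + b from examples.

    Uses plain gradient descent on the mean squared error.
    """
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step a little in the direction that reduces the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Invented examples generated by the rule y = 2x + 1, which the code never sees.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = fit_line(xs, ys)
print(f"learned slope={w:.3f}, intercept={b:.3f}")
```

The learned slope and intercept end up close to 2 and 1. Deep learning applies the same idea to models with many layers and far more parameters.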

Why Now?

Computer scientists began tinkering with AI in the 1950s, but only in the last decade have better hardware (including faster processors and more abundant storage), larger data sets, and more capable algorithms improved its functionality enough to unlock many new applications.

Prospects for Advancement

AI has long been surrounded by hype. In the 1960s and 70s, some computer scientists predicted that within a decade we would see machines that could think like humans. But the field advanced slowly, leading to two distinct periods called “AI winters.” We now appear to have entered an “AI spring,” with regular announcements of breakthroughs.

Some pundits still promise (or warn) that robust progress will continue, or even accelerate, at such a fast rate that AI will soon become capable of human-level intelligence. In contrast, some predict a third “AI winter,” in part because exponential increases in data and processing speed have not created exponential increases in AI capabilities.

ITIF expects that AI will continue to make incremental but important progress for the foreseeable future. As AI becomes more mainstream, the current utopian/dystopian thinking will fade away, replaced by a more measured assessment of what AI can and cannot do.

Applications and Impact

By increasing the level of automation in virtually every sector, leading to more efficient processes and higher-quality outputs, AI will boost per-capita incomes. There is a vast and diverse array of uses for AI, and many U.S. organizations are already using it. Parts manufacturers are using AI to invent new metal alloys for 3D printing; pharmaceutical companies are using AI to discover lifesaving drugs; mining companies are using AI to predict the location of mineral deposits; credit card companies are using AI to reduce fraud; and farmers are using AI to increase automation. As the technology progresses, AI will continue to bring significant benefits to individuals and societies.

AI is a “general purpose technology,” meaning, among other things, that it will affect most functions in the economy. In some cases, AI will automate work that would otherwise need to be performed by a human, thereby boosting productivity. AI can also complete tasks that are not worth paying a human to do but still create value, such as writing newspaper articles to summarize Little League games. In other cases, AI adds a layer of analytics that uncovers insights human workers would be incapable of providing. In many cases, it boosts both quality and efficiency. For example, researchers at Stanford have used machine learning techniques to develop software that can analyze lung tissue biopsies faster and more accurately than a top human pathologist can. AI is also delivering social benefits, such as by rapidly analyzing the deep web to crack down on human trafficking, fighting harassment online, helping development organizations better target impoverished areas, and reducing the influence of gender bias in hiring decisions. Finally, AI will be an increasingly important technology for defense and national security, where it can address many goals, such as improving logistics, detecting and responding to cybersecurity incidents, and analyzing the enormous volume of data produced on the battlefield.

AI will have its biggest impacts on more routinized information-based functions (e.g., making loans, analyzing a medical test, plotting the best route) and physical functions (e.g., guiding a factory robot, helping a car drive), and it will have much less impact on human-services functions (e.g., taking care of the elderly) and on complex functions (e.g., criminal law, managing a company). Indeed, the correlation between the risk of job loss from AI (and other emerging technologies) and average education and skill level of an occupation is strong and negative (above -0.5).
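For readers unfamiliar with the statistic, the sketch below shows how a correlation coefficient of this kind is computed and what a strong negative value looks like. The occupation figures are invented purely for illustration and are not ITIF data; the function name `pearson` and both lists are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / sqrt(spread_x * spread_y)


# Invented values for eight imaginary occupations (NOT real data):
# average years of education, and an estimated risk of automation (0 to 1).
education_years = [10, 12, 12, 14, 16, 16, 18, 20]
automation_risk = [0.80, 0.75, 0.55, 0.60, 0.35, 0.50, 0.20, 0.15]

r = pearson(education_years, automation_risk)
print(f"correlation: {r:.2f}")
```

A coefficient near -1 means that as one quantity rises the other tends to fall; a value near 0 would mean no linear relationship.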

Schematic for processing data inside a neural network. Logical scheme of a perceptron with three outputs, an input and intermediate layers.
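The structure described in this caption can be sketched in a few lines: an input layer, intermediate (hidden) layers, and three outputs. The example below is a minimal forward pass only, assuming two input features, two hidden layers of three units each, and hand-picked weights. All names and numbers are hypothetical; a real network would learn its weights from data.

```python
import math


def relu(z):
    """Common activation: pass positive values, zero out negatives."""
    return max(0.0, z)


def dense(inputs, weights, biases, activation):
    """One fully connected layer: weighted sums followed by an activation."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Arbitrary illustrative weights (not trained).
W1 = [[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]]                       # input -> hidden 1
B1 = [0.0, 0.1, -0.1]
W2 = [[0.3, -0.5, 0.2], [0.7, 0.1, -0.4], [-0.2, 0.6, 0.5]]       # hidden 1 -> hidden 2
B2 = [0.05, 0.0, -0.05]
W3 = [[0.4, -0.1, 0.3], [-0.6, 0.2, 0.1], [0.2, 0.5, -0.3]]       # hidden 2 -> 3 outputs
B3 = [0.0, 0.0, 0.0]


def forward(x):
    """Pass an input through both intermediate layers to three outputs."""
    hidden1 = dense(x, W1, B1, relu)
    hidden2 = dense(hidden1, W2, B2, relu)
    scores = dense(hidden2, W3, B3, lambda z: z)
    return softmax(scores)


outputs = forward([1.0, 2.0])
print(outputs)
```

Each layer transforms the previous layer's output, which is the sense in which deep learning processes "multiple layers of abstractions" of the data.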

Policy Implications

There are three important policy implications. First, governments should develop strategies to promote AI innovation, such as by targeting R&D funding, accelerating the use of AI in the public sector, and supporting its use in sectors with public oversight, such as education, finance, health care, and transportation.

Second, governments should follow the “innovation principle” rather than the “precautionary principle”: they should address risks as they arise, or allow market forces to address them, and not hold back progress with restrictive tax and regulatory policies because of speculative fears. For example, requiring companies to disclose their source code (“algorithmic transparency”) or to be able to interpret any automated decision (“algorithmic explainability”) would limit AI innovation; policymakers should instead focus on holding companies accountable for outcomes (“algorithmic accountability”).

Finally, because AI is likely to modestly increase the rate of occupational disruption, particularly among lower-skilled occupations, governments should do more to help workers make successful transitions. In part this means education reform should be focused on enabling workers to gain “21st century generic skills” and more technical skills.

Recommended Reading

- Robert D. Atkinson, “Don’t Fear AI” (European Investment Bank, June 2018).

- Joshua New and Daniel Castro, “How Policymakers Can Foster Algorithmic Accountability” (ITIF, May 2018).

- Joshua New and Daniel Castro, “The Promise of Artificial Intelligence: 70 Real-World Examples” (ITIF Center for Data Innovation, October 2016).

- Robert D. Atkinson, “How to Reform Worker-Training and Adjustment Policies for an Era of Technological Change” (ITIF, February 2018).

- Daniel Castro, “How Artificial Intelligence Will Usher in the Next Stage of E-Government” (Government Technology, December 16, 2016).

- Nick Wallace and Daniel Castro, “The Impact of the EU’s New Data Protection Regulation on AI” (ITIF Center for Data Innovation, March 2018).
