A New Frontier: Sustaining U.S. High-Performance Computing Leadership in an Exascale Era

Continued leadership in high-performance computing (HPC) as it enters the exascale era remains a key pillar of U.S. industrial competitiveness, economic power, and national security readiness. Policymakers need to sustain investments in HPC applications, infrastructure, and skills to keep America at the leading edge.

KEY TAKEAWAYS


Contents

What Is High-Performance Computing?

Why Does High-Performance Computing Matter?

Unlocking New Pathways to Scientific and Technology Discovery

HPC Represents the “Tip of the Spear” in Advanced Computing

Maximizing the Potential of AI/ML/DL

Economic Impact of Supercomputing

Why Does National Leadership in HPC Matter?

International Supercomputing Leadership

Next-Generation Commercial Applications of HPC

HPC Enabling Aerospace Innovation

HPC Enabling Automotive Innovation and Mobility Solutions

HPC Enabling Consumer Packaged Goods Innovation

HPC Enabling U.S. Life Sciences Innovation

Defense and Environment-Oriented Applications of HPC

Introduction

High-performance computing (HPC) refers to supercomputers that, through a combination of processing capability and storage capacity, can rapidly solve difficult computational problems across a diverse range of scientific, engineering, and business fields.[1] HPC represents a strategic, game-changing technology with tremendous implications for economic competitiveness, scientific leadership, and national security. Because HPC stands at the forefront of scientific discovery and commercial innovation, it is also a frontier of competition for nations and their enterprises alike, making U.S. strength in producing and adopting HPC instrumental to the nation’s industrial competitiveness and national security capability.

On May 30, 2022, the world entered the exascale computing era with the launch of Frontier, a supercomputer at the Department of Energy’s (DOE’s) Oak Ridge National Laboratory (ORNL) capable of executing more than one quintillion floating point operations per second (FLOPS). The advent of exascale computing will unlock a wealth of previously unimaginable research opportunities across scientific, technical, and engineering fields, opportunities whose surface scientists have only begun to scratch. But exascale is only part of the story: a growing number of ever-more-capable supercomputers is helping researchers in aerospace, astronomy, biology, particle physics, seismology, weather, and many other fields achieve breakthroughs in the modeling and simulation (M&S) of complex biological, chemical, and physical systems, deepening scientific understanding and unleashing new innovations. For American industry, leadership in applying HPC is integral to research and product development, time to market, cost avoidance, and energy efficiency in manufacturing processes, making facility with HPC a key mechanism for achieving competitive advantage and going to market with differentiated and unique value propositions. In short, HPC represents a key capability from both an economic and a national security perspective, and policymakers must continue to sustain investments and build ecosystems to ensure America leads the world in this critical technology.

This report begins by explaining what HPC is and why both HPC itself and national leadership in it matter. It then assesses the state of global HPC leadership before exploring a range of cutting-edge HPC applications across industrial, national security, and mission-oriented domains. It concludes by providing policy recommendations to ensure America remains the world’s leader in developing HPC systems and applications.

What Is High-Performance Computing?

HPC refers to the application of supercomputers—the world’s fastest, largest, most-powerful computer systems—alongside sophisticated models and large datasets to study and solve complex scientific, engineering, and technological challenges, especially those requiring the understanding, modeling, and simulation of complex, multivariate physical systems.[2] HPC unites several technologies, including computer architecture, programs and electronics, algorithms, and application software, under a single platform to solve advanced, sometimes heretofore intractable problems quickly and effectively.

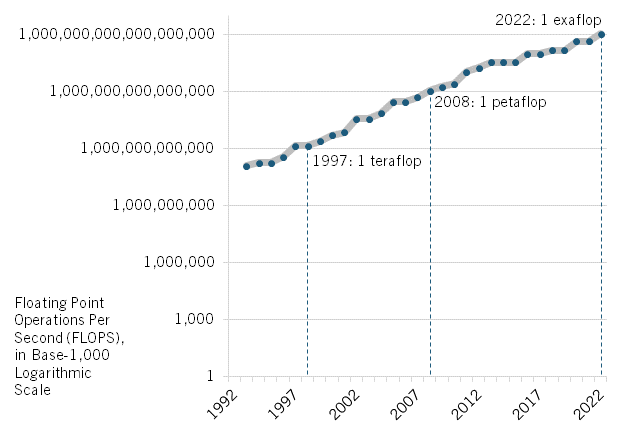

The world’s leading supercomputers are measured by the number of FLOPS they can execute, and their capacity has increased enormously over the past 30 years. In 1993, the world’s fastest computer was capable of executing 124 billion FLOPS, more than 10⁹ FLOPS, a level known as “gigaflops.” The speed of the world’s fastest supercomputer steadily increased in the ensuing decades, crossing the teraflop (10¹² FLOPS) threshold in 1997 and the petaflop (10¹⁵ FLOPS) barrier in 2008. (See figure 1.) Fourteen years later, with the launch of the Frontier supercomputer at ORNL on May 30, 2022, the United States became the first nation to publicly field an exascale supercomputer (China may have had two supercomputers cross this threshold in 2021, though these were not formally submitted to the top 500 list), one capable of executing 10¹⁸, or one quintillion (that is, one million trillion), floating point operations per second (i.e., an “exaflop”).[3] (See figure 2.)

Figure 1: Speeds of world’s fastest supercomputers, 1993–2022[4]

Frontier, with 8.7 million cores and a rated speed of 1.102 exaflops, surpassed Japan’s then-world-leading Supercomputer Fugaku (Fugaku is another name for Mount Fuji) which, with 7.6 million cores, was rated at 537 petaflops.[5] (A core is an independent processing unit that fetches and executes its own stream of instructions; a supercomputer’s millions of cores work on parts of a problem simultaneously.) Frontier occupies more than 4,000 square feet and includes 90 miles of cable and 74 cabinets, each weighing 8,000 pounds.[6] The United States plans to bring a second exascale-capable computer, Aurora, online at Argonne National Laboratory later in 2022, with performance that may exceed two exaflops.[7] In 2023, the United States will bring online a third exascale-capable computer, El Capitan, expected to operate at 1.5 exaflops, at Lawrence Livermore National Laboratory (LLNL), where its foremost mission will be helping manage the nation’s nuclear arsenal.[8]

Figure 2: Frontier supercomputer, Oak Ridge National Laboratory[9]

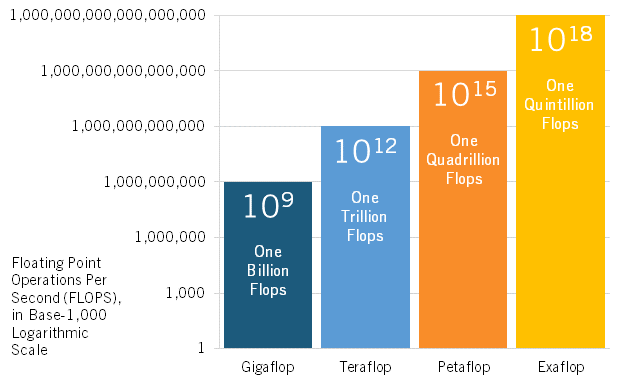

It’s critical to understand that each step change in computer processing speeds, from gigaflops to teraflops to petaflops to exaflops, represents a 1,000-fold increase in peak computing speed: that is, an increase of three “orders of magnitude” (an order of magnitude being an increase by a factor of 10). Thus, an exascale supercomputer is 1,000 times faster and more powerful than a petascale computer. (See figure 3.) All told, the performance of the world’s fastest supercomputer has increased almost 70,000-fold over the past 20 years.[10]
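The arithmetic behind these prefixes can be checked directly. The short Python sketch below (the helper names are illustrative, not drawn from any HPC tool) steps through the prefix ladder and converts the “almost 70,000-fold” figure into an implied constant annual growth rate, treating the 20-year gain as smooth compounding, which real progress was not.

```python
import math

# Named performance thresholds, in floating point operations per second (FLOPS).
THRESHOLDS = {
    "gigaflops": 10**9,
    "teraflops": 10**12,
    "petaflops": 10**15,
    "exaflops": 10**18,
}


def orders_of_magnitude(ratio: float) -> float:
    """Number of factor-of-10 steps contained in a speed ratio."""
    return math.log10(ratio)


def implied_annual_growth(total_factor: float, years: float) -> float:
    """Constant yearly multiplier that compounds to total_factor over the given years."""
    return total_factor ** (1 / years)


names = list(THRESHOLDS)
for lower, higher in zip(names, names[1:]):
    step = THRESHOLDS[higher] // THRESHOLDS[lower]
    print(f"{lower} -> {higher}: {step:,}x ({orders_of_magnitude(step):.0f} orders of magnitude)")

# Frontier's rated 1.102 exaflops versus the 124-gigaflops leader of 1993.
print(f"1993 -> 2022: {1.102e18 / 124e9:,.0f}x")

# The "almost 70,000-fold" gain over 20 years, expressed as a yearly multiplier.
print(f"Implied yearly multiplier: {implied_annual_growth(70_000, 20):.2f}")
```

Each prefix step works out to 1,000x, or three orders of magnitude; the 1993-to-2022 ratio comes to roughly 8.9 million-fold; and the implied yearly multiplier is about 1.75, or roughly 75 percent growth per year sustained for two decades.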

Figure 3: Conceptualizing the growth in supercomputer processing speeds[11]

Why Does High-Performance Computing Matter?

Conceptually, HPC matters greatly to countries’ economic and national security for several key reasons: it 1) unlocks new avenues toward scientific and technological discovery; 2) represents the “tip of the spear” for more capable computing architectures and technologies that ultimately make one’s laptop, tablet, or smartphone more capable; 3) will be vital to unlocking the enormous promise of big data and artificial intelligence/machine learning/deep learning (AI/ML/DL) to facilitate discovery and innovation; and 4) produces significant economic benefits, both directly and indirectly. And while parts of the following section are framed in terms of new opportunities realizable now that exascale computing has been achieved, it’s imperative to note that there is as yet only one publicly benchmarked exascale supercomputer, so the benefits discussed below apply broadly to HPC, whether supercomputers operate in the petaflop or exaflop range.


Unlocking New Pathways to Scientific and Technology Discovery

As noted, an exascale-capable computer is 1,000 times faster and more powerful than a petascale-capable computer. That matters, for as the Dutch computer scientist Edsger Dijkstra explained, “A quantitative difference is also a qualitative difference, if the quantitative difference is greater than an order of magnitude.”[12] Thus, one key reason the achievement of exascale-capable supercomputers matters is that every order-of-magnitude increase in computing capability brings a qualitative change in what that computing power can achieve. In other words, the types of applications one can run on exascale platforms are fundamentally different from those one can run on petascale platforms.[13] At one quintillion operations per second, exascale computers will be able to “more realistically simulate the processes involved in scientific discovery and national security such as precision medicine, microclimates, additive manufacturing, the crystalline structure of atoms, the functions of human cells and organs, and even the fundamental forces of the universe.”[14]

As Rick Arthur, senior director for advanced computational methods research at GE Research, framed it:

A researcher can only model a universe that will fit within the size of the largest computer they can access. Therefore, top “leadership class” computers set the threshold for what phenomena can and cannot be perceived, studied, and understood. That is, if the data or model of the subject of interest surpass what can [be] stored or feasibly processed on your computer, insight is beyond your reach. Exascale computer systems greatly expand the universe of what can be modeled by scientists and engineers, so they can achieve never-before realism in physics-based and data-derived models; with much greater completeness, accuracy, and fidelity in scale, and scope, and the ability to more confidently assess sensitivities from the inputs and confidence boundaries on the outputs.[15]

In other words, exascale-era computing will open new doorways to solving complex, multivariate scientific, technological, and engineering challenges, especially those requiring data-intensive, M&S-driven solutions, allowing such phenomena to be understood at levels of resolution, granularity, and detail never before possible. Supercomputers (in general, and exascale in particular) will enable the construction of higher-fidelity multiphysics models that mathematically describe the real-time interplay of diverse physical phenomena and variables within a system, facilitating the simulation of complex interactions and making it possible to isolate the relative effect of each variable on the system.[16] While it’s not an exact analogy, computing at 10¹⁸ FLOPS will enable scientists to investigate complex physical systems at roughly tenfold greater resolution, dimension, or time period (in each case, scaled either up or down), and at much faster speeds, whether modeling the behavior of nine billion individual atoms at the instant of an atomic explosion, the action of each cell in a beating human heart, the movement of electricity through a smart grid, or the crystalline structure of atoms or molecules.
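The “tenfold” figure is consistent with a common rule of thumb for grid-based simulation, stated here as an assumption rather than a general law: if the cost of a run grows with the number of grid cells, then 1,000 times more computing buys roughly 10 times finer resolution along each of three spatial dimensions, because 10 × 10 × 10 = 1,000. The hypothetical `resolution_gain` helper below makes that explicit and also shows a case where the gain shrinks.

```python
def resolution_gain(compute_factor: float, dims: int = 3, time_stepping: bool = False) -> float:
    """Linear refinement affordable with compute_factor times more operations.

    Assumption: cost scales with the number of grid cells, i.e., refinement ** dims.
    If time_stepping is True, also assume the number of time steps grows in
    proportion to the spatial refinement (as in many explicit solvers),
    which adds one more power to the cost.
    """
    exponent = dims + (1 if time_stepping else 0)
    return compute_factor ** (1 / exponent)


# Petascale to exascale is a 1,000x increase in computing capability.
for label, kwargs in [("3-D grid", {}), ("3-D grid + time steps", {"time_stepping": True})]:
    print(f"{label}: {resolution_gain(1000, **kwargs):.1f}x finer per dimension")
```

Under these assumptions, the three-dimensional case yields a refinement of about 10x, while also shrinking the time step in proportion cuts the gain to roughly 5.6x per dimension, one reason such comparisons are not exact.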

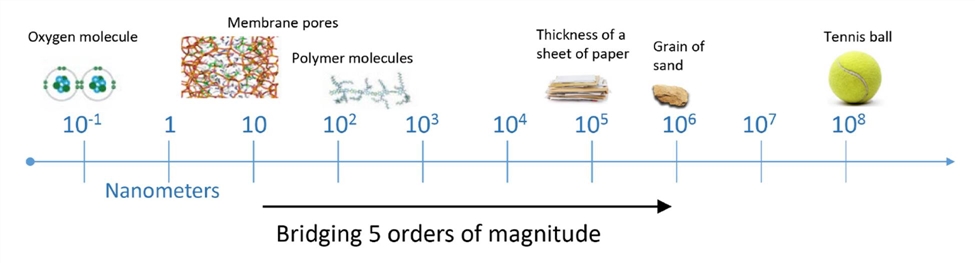

As Arthur explained it, “Computational tools like HPC fundamentally represent a scientific instrument,” just like a microscope (which allows one to interrogate systems in extreme detail) or a macroscope (like a telescope, which allows researchers to perceive system-wide interactions and explore a vast dimensionality).[17] Scientists investigate physical phenomena and systems across a wide range of physical scales (i.e., different sizes or lengths), temporal scales, and spatial scales (e.g., the extent of an era or region over which a phenomenon occurs). This matters tremendously because physical phenomena can behave very differently at small scales (e.g., the nanoscale) than at large scales (e.g., the macroscale). Supercomputers can model behavior in a system at tens of thousands to hundreds of thousands of times greater resolution (i.e., level of detail) than other computers. For instance, understanding nanoscale physics, such as the behavior of fluids moving across membrane pores, involves modeling interactions that occur over lengths of 100 nanometers (nm) or less. Conversely, studying the impacts of such interactions at the macroscale (visible to the naked eye) requires scaling them up by four orders of magnitude, or 10,000 times, to 1,000,000 nm (1 millimeter), or about the size of a grain of sand.[18] (See figure 4.)

Figure 4: Conceptualizing scale lengths by orders of magnitude[19]

This matters particularly for U.S. industrial competitiveness because, as a report from DOE’s Office of Energy Efficiency and Renewable Energy (EERE) explains, in manufacturing, “The more easily achievable progress has been made; the so-called ‘low hanging fruit’ have been picked.”[20] In other words, game-changing or competitive advantage-creating industrial innovation will increasingly require companies to reach the “high-hanging fruit,” which HPC is uniquely positioned to facilitate. As the report elaborates:

Problems and opportunities for improved productivity and performance remain, yet next-generation innovations tend to be more complex and carry higher risk. More sophisticated research approaches are needed to identify opportunities. Detailed analysis with HPC can discern cost-effective ways to improve productivity, increase sustainability, and save energy.[21]

Another way supercomputers can unlock innovation is by providing a new dimension to the scientific method. Heretofore, the fundamental steps in the scientific method were 1) research, 2) form a hypothesis, 3) conduct an experiment, and 4) analyze the data and draw a conclusion. But HPC enables the introduction of an entirely new step through its simulation and prediction capabilities. That is, the model of “theory/experiment/analysis” in the sciences or “theory/build a physical prototype/experiment/analyze” in product development is changing to one of “theory/predictive simulation/experiment/analyze.”[22] Thus, HPC-enabled computer simulation becomes a “third pillar” of scientific discovery, complementing traditional theory and experimentation.[23]


As DOE EERE explained, “Historically, advancements in manufacturing have relied on repetitive-trial-and-error development and experimentation.”[24] But these research methods have inherent limitations: they are too often expensive, slow, risky, or infeasible, or they cannot reveal what is occurring within complex systems. In contrast, “Computational experiments on a supercomputer can explore complex systems that are difficult to simulate using physical experiments and typical computers.”[25] While the DOE quote refers to HPC’s use in manufacturing, the principle applies to any domain of scientific inquiry, whether developing the optimal design of a nuclear reactor or a chemical catalyst or modeling the movement of weather systems or galaxies. Put differently, HPC allows engineers to design products from airplanes to wind turbines to nuclear reactors on computers, so that initial physical prototypes can be fabricated with a more “confirmatory” than “exploratory” expectation regarding their features and performance attributes. This will help accelerate speed to market for a wide range of products designed with the benefit of supercomputers. Lastly, the faster speeds of exascale-era computers, that is, the capability to perform in hours computations that previously took days, or in days calculations that used to take weeks, will not only save time for a given application but also free up HPC resources for a wide variety of additional uses. (Incidentally, computer scientists call using more processors to solve a fixed-size problem faster “strong scaling,” and using more processors to solve a proportionally larger or finer-resolution problem in about the same time “weak scaling”; HPC enables both.)
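Strong and weak scaling are commonly analyzed with two textbook models (not drawn from this report): Amdahl’s law, which bounds the speedup of a fixed-size problem, and Gustafson’s law, which describes the speedup when the problem grows with the processor count. In the Python sketch below, the 5 percent serial fraction is a hypothetical value chosen for illustration.

```python
def amdahl_speedup(processors: int, serial_fraction: float) -> float:
    """Strong scaling: speedup of a fixed-size problem (Amdahl's law)."""
    return 1 / (serial_fraction + (1 - serial_fraction) / processors)


def gustafson_speedup(processors: int, serial_fraction: float) -> float:
    """Weak scaling: scaled speedup when work per processor stays fixed (Gustafson's law)."""
    return serial_fraction + (1 - serial_fraction) * processors


serial = 0.05  # hypothetical: 5 percent of the work cannot be parallelized
for p in (10, 100, 1_000, 10_000):
    print(f"{p:>6} processors: strong {amdahl_speedup(p, serial):7.1f}x, "
          f"weak {gustafson_speedup(p, serial):9.1f}x")
```

With these assumptions, strong-scaling speedup levels off near 20x no matter how many processors are added (the limit is 1 divided by the serial fraction), whereas weak-scaling speedup keeps growing almost linearly. This is why larger machines are often used to tackle bigger or finer-grained problems rather than only to finish the same problem sooner.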

HPC Represents the “Tip of the Spear” in Advanced Computing

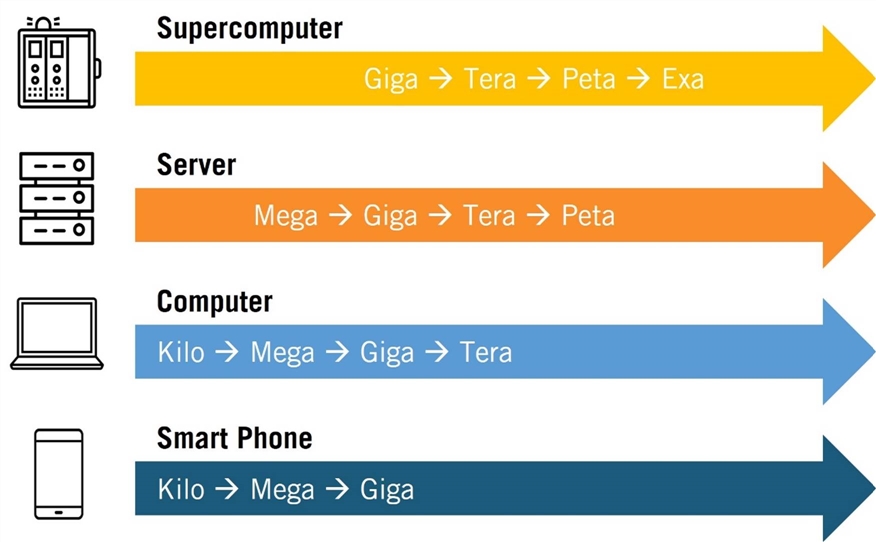

The demand for ever-faster application-specific logic and memory semiconductors, including graphics processing units (GPUs) and other accelerators, along with high-speed interconnects and sophisticated software, positions HPC at the frontier of advanced computing. And just as supercomputers have moved from giga- to tera- to peta- to exascale capabilities, so too have downstream information technology (IT) devices, from servers to personal computers to smartphones, become ever faster and more capable. (See figure 5.) This is, of course, a manifestation of Moore’s Law, the observation that the number of transistors on a microchip doubles about every two years, which has historically meant that chips roughly double in processing capability at the same or lower cost (a progression also called “process-node scaling”). But the point is that the pursuit of exascale computing has driven innovations in semiconductor and computer architecture design that ultimately propagate across downstream IT platforms all the way to the individual consumer.
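A short calculation shows how Moore’s Law compounds. The sketch assumes a clean doubling every two years, an idealization; actual transistor scaling has varied from period to period.

```python
def moores_law_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Growth factor if a quantity doubles once every doubling_period_years."""
    return 2 ** (years / doubling_period_years)


for years in (2, 10, 20):
    print(f"{years:>2} years: {moores_law_factor(years):,.0f}x")
```

Twenty years of doubling yields about 1,024-fold growth, roughly one prefix step (three orders of magnitude). The almost 70,000-fold gain in top supercomputer performance over a similar span therefore outpaced what transistor doubling alone would deliver.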

Figure 5: The pursuit of exascale helps drive IT innovation[26]

Maximizing the Potential of AI/ML/DL

McKinsey analysts estimated that AI—a field of computer science devoted to creating computing systems that perform operations analogous to human learning and decision-making—may deliver additional global economic output reaching $13 trillion by 2030, increasing global gross domestic product (GDP) by about 1.2 percent annually.[27] AI is positioned to deliver such tremendous impact in part because it will help enterprises extract and apply actionable insight and intelligence from data in real time. Two subfields of AI will be particularly important in this regard: ML, a branch of AI focusing on designing algorithms that can automatically and iteratively build analytical models from new data without explicitly programming a solution, and DL, a subfield of ML that structures algorithms in layers to create “neural networks” that can learn.[28]

But if the spreadsheet was the so-called “killer app” for the personal computer, so too may be the marriage of big datasets and AI/ML/DL with supercomputers, with the latter substantially enabling the former. As John Sarrao, deputy director for science, technology, and engineering at Los Alamos National Laboratory (LANL), explained, “The next generation of exascale computing will enable AI solutions we can barely imagine today.”[29] Indeed, the advent of AI/ML/DL has “created new demands for HPC with its own application-specific technical requirements that include mixed precision and integer math,” and a growing share of HPC “loads” (i.e., usage) is devoted to AI/ML/DL applications.[30] In particular, supercomputers are proving transformative in rapidly training algorithms on large datasets, the essence of ML, thus enabling the development of AI tools that can be served across a variety of platforms, from mobile phones to fitness monitors. Apple’s Siri voice recognition software provides a good example of an end-user AI-based service: it is now delivered to smartphones via the cloud, but it was originally developed with the help of supercomputers.
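The “mixed precision” requirement mentioned above reflects a trade-off that can be shown in a few lines of standard-library Python. The sketch below emulates 16-bit (half-precision) and 32-bit (single-precision) floating point numbers by round-tripping values through the `struct` module’s `e` and `f` formats; real accelerators do this in hardware, so treat it as an illustration only. Half precision stores each number in half the bytes of single precision, which lets hardware move and process more numbers per second, but it represents large values coarsely.

```python
import struct


def to_half(x: float) -> float:
    """Round a Python float to IEEE 754 half precision (16 bits) and back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_single(x: float) -> float:
    """Round a Python float to IEEE 754 single precision (32 bits) and back."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def running_sum(values, rounding):
    """Accumulate values, rounding the running total after every addition."""
    total = 0.0
    for v in values:
        total = rounding(total + v)
    return total


print("bytes per value:", struct.calcsize("<e"), "(half) vs", struct.calcsize("<f"), "(single)")

ones = [1.0] * 10_000
half_sum = running_sum(ones, to_half)      # stalls once the total reaches 2048
single_sum = running_sum(ones, to_single)  # float32 represents every integer up to 2**24
print(half_sum, single_sum)
```

Summing 10,000 ones while rounding the running total to half precision stalls at 2048, because 2049 cannot be represented and rounds back down; the same sum in single precision reaches 10,000 exactly. Mixed-precision schemes typically exploit the speed of low precision for most arithmetic while keeping sensitive quantities, such as running totals, in higher precision.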

Moreover, HPC systems can handle not only large datasets but also a wide variety of structured data (e.g., data in a spreadsheet) and unstructured data, such as images, video, audio, text, telemetry, temperature, and air pressure, much of it arriving from sensors, machines, and satellites. As one report explains, “High performance data analytics requires the special storage and interconnect capabilities of HPCs to effectively process large and diverse data sets that may include voice, text, image, and instrumentation outputs to generate new insight appropriate to a wide range of sectors including medicine, finance, transportation, and manufacturing.”[31] Going forward, supercomputers will be well positioned to consume diverse data from a wide range of sources and synthesize it in real time to generate actionable intelligence and insights delivered to users in the field, or “on the edge”; in other words, supercomputers will complement edge computing and help unlock its potential.[32] Lastly, the AI-HPC relationship also runs in the other direction. Complex models and simulations running on HPC machines generate enormous amounts of data in which the meaningful “needle in the haystack” is often hard to find, so researchers frequently run AI/ML algorithms against these massive HPC-generated datasets to unearth novel insights.


Economic Impact of Supercomputing

Lastly, HPC matters because it produces manifold economic impacts, ranging from the economic value supercomputing creates for the users of HPC in the products they develop to the economic impact generated by the HPC industry’s sales of its products.

Economic Impact Generated Through the Use of Supercomputers

As a report by Earl Joseph et al. at Hyperion Research explains, “While it is difficult to fully measure the value that supercomputers have generated, even looking at just automotives, aircraft, and pharmaceuticals supercomputers have contributed to products valued at more than $100 trillion over the last 25 years.”[33] Hyperion has estimated that the economic value created by the application of Linux system-based supercomputers (which account for virtually all of the world’s top 500 supercomputers) has exceeded $3 trillion over the past 25 years.[34]

In a study of 175 industrial firms, Hyperion found that, on average, the companies realized $452 for every $1 they invested in HPC. (See table 1.) Narrowing the study to enterprises in the finance, life-sciences, manufacturing, and transportation industries, firms realized $504 in “sales revenue” and $38 in “profits or cost savings” for every $1 invested in HPC.[35] Hyperion estimated that those 175 HPC-supported projects created 2,335 new jobs in the companies studied. Such significant impacts from HPC shouldn’t be surprising, as “some large industrial firms have cited savings of $50 billion or more from HPC usage.”[36]

Table 1: Financial return on investment from HPC, by select industry[37]

| Industry | Average Revenue per HPC Dollar Invested | Average Profit or Cost Savings per HPC Dollar Invested |
|---|---|---|
| Defense | $75.00 | $18.80 |
| Financial | $641.70 | $47.40 |
| Insurance | $175.70 | $280.00 |
| Life Sciences | $205.60 | $40.90 |
| Manufacturing | $216.50 | $28.40 |
| Oil and Gas | $416.00 | $53.70 |
| Telecommunications | $210.70 | $30.40 |
| Transportation | $1,804.30 | $15.60 |
| TOTAL | $452.10 | $37.60 |

A 2018 report examines the return on investment (ROI) of three research cyberinfrastructure resources: Indiana University's Big Red II supercomputer, the National Science Foundation (NSF)-funded Jetstream cloud system, and the federally funded eXtreme Science and Engineering Discovery Environment (XSEDE).[38] Based on the cost of each resource, the computing core hours it provided, and the price of comparable core hours on Amazon Web Services, the authors estimated the ROI of Big Red II from 2013 to 2017 to be 2.5–3.7; the one-year ROI of Jetstream to be 1.9–3.1; and the ROI of XSEDE in its most recent project year to be 1.34 (up from 1.17 in the prior project year). In other words, each resource returned more value than it cost, and the Big Red II supercomputer showed the highest estimated returns of the three.
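The ROI method described above can be expressed as a simple ratio: the value of the delivered core hours, priced at a comparable commercial cloud rate, divided by the cost of the resource. The sketch below uses invented inputs and a hypothetical `cloud_equivalent_roi` function; it is not the study authors’ code and ignores any adjustments they may have made.

```python
from dataclasses import dataclass


@dataclass
class ResourceYear:
    """Hypothetical inputs for one period of a research computing resource."""
    cost_usd: float                   # cost of providing the resource
    core_hours_delivered: float       # computing core hours provided to researchers
    cloud_price_per_core_hour: float  # assumed comparable commercial cloud price


def cloud_equivalent_roi(r: ResourceYear) -> float:
    """Value of delivered core hours at cloud prices, divided by the resource's cost."""
    return r.core_hours_delivered * r.cloud_price_per_core_hour / r.cost_usd


# Illustrative numbers only; they are not taken from the 2018 study.
example = ResourceYear(cost_usd=2_000_000, core_hours_delivered=100_000_000,
                       cloud_price_per_core_hour=0.05)
print(f"ROI: {cloud_equivalent_roi(example):.2f}")
```

With these illustrative inputs the ratio is 2.5; any value above 1 means providing the core hours in-house cost less than buying the same hours from a cloud provider would have.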

A 2017 report estimates the regional economic impacts of the National Center for Supercomputing Applications’ (NCSA’s) Blue Waters supercomputer at the University of Illinois Urbana-Champaign, finding that Blue Waters-related projects added $1.08 billion to the state's economy.[39] The report also estimates that, over the project's life (October 2007 to June 2019), it will have created 5,772 full-time-equivalent jobs, and that it supported 1,892 direct and indirect jobs between April 2013 and June 2016. The estimated output multiplier from project expenditures was 1.86 and the estimated employment multiplier was 2.04.[40]

Economic Impact Generated By the HPC Industry

In terms of the industry itself, Hyperion Research has estimated that over $300 billion has been generated from the sales of Linux-based supercomputers. Going forward, Hyperion estimates global sales of Linux-based supercomputers from 2022 to 2026 will generate $90 billion in machine sales and $90 billion in supporting infrastructure.[41] Hyperion estimated the global HPC market at $34.8 billion in 2021 and projects that sales of on-premises HPC servers will increase by 7.9 percent over the next five years, while cloud-based HPC usage will grow by 17.6 percent over that timeframe.[42]
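The growth figures above are stated as changes “over the next five years.” Market forecasts of this kind are often expressed as compound annual growth rates; if that is the intended reading here (an assumption, not confirmed by the text), the cumulative five-year growth is considerably larger than the headline percentages, as the sketch below shows.

```python
def cumulative_growth(annual_rate: float, years: int) -> float:
    """Total growth factor from compounding annual_rate for the given number of years."""
    return (1 + annual_rate) ** years


for label, rate in [("On-premises HPC servers", 0.079), ("Cloud-based HPC", 0.176)]:
    factor = cumulative_growth(rate, 5)
    print(f"{label}: {factor:.2f}x after five years ({(factor - 1) * 100:.0f}% cumulative)")
```

Read as annual rates, 7.9 percent compounds to roughly 1.46 times the starting level after five years, and 17.6 percent compounds to roughly 2.25 times, that is, more than doubling.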

Why Does National Leadership in HPC Matter?

Broadly, the United States remains the leader in both developing HPC systems and deploying them, although that lead has shrunk. Some might ask why it matters that the United States should lead in HPC. Likewise, others might argue that so long as HPC users in the United States—whether enterprises, academic researchers, or government agencies—can get access to the HPC systems they need, it does not matter which enterprises in the world manufacture those machines, so policymakers should be agnostic on the issue. However, such contentions are misguided for a number of reasons.

First, supercomputers are a vital enabler of U.S. defense capabilities, especially the nation’s nuclear defense posture (as a subsequent section of this report elaborates). In fact, one could substitute nuclear weapons for high-performance computers and ask whether it would be troubling if the United States depended on China or the European Union for its nuclear weapons systems. If relying on other nations to supply the U.S. nuclear arsenal sounds untenable, then so does relying on other nations for the most sophisticated HPC systems. From a national security perspective, the United States needs assured access to the best high-performance computers in the world because they give U.S. defense planners a competitive edge and allow the U.S. defense industrial base to design leading-edge weapons systems and national defense applications faster than anyone else.

Second, the notion that U.S. enterprises would certainly enjoy ready access to the most sophisticated HPC systems for commercial purposes should they be predominantly produced by foreign vendors constitutes an uncertain assumption. If Chinese vendors, for example, dominated globally in the production of next-generation HPC systems, it’s conceivable that the Chinese government could exert pressure on its enterprises to supply those systems first to their own country’s aerospace, automotive, or life-sciences enterprises and industries in order to assist them in gaining competitive advantage in global markets. The notion that U.S. enterprises can rely risk-free on access to the world’s leading HPC systems if they are no longer being developed in the United States amounts to a tenuous expectation that could place broad swaths of downstream HPC-consuming industries in the United States at risk. America’s dependence on Chinese suppliers for personal protective equipment in the opening stages of the COVID-19 pandemic provides a salient warning of the risks of depending on foreign (especially Chinese) suppliers in exigent situations.

Third, and perhaps most compelling, HPC systems are not developed in a vacuum: HPC vendors don’t go off into a room and draw up designs and prototypes for new HPC systems by themselves, hoping someone will purchase them later. Rather, HPC vendors often have strong relationships with their customers, who co-design next-generation HPC systems in partnership with them. So-called “lighthouse [or ‘lead’] users,” which are as often government agencies such as DOE or the Department of Defense as they are leading-edge corporate users, define the types of complex problems they want to use HPC systems to solve, and the architecture of the system (e.g., how the cores will be designed to handle the threads calculating the solutions) is then co-created. This ecosystem between HPC vendors and the most advanced commercial and government users, and the symbiotic relationship within it, pushes the frontier of HPC systems forward. So when a country leads in HPC, its vendors can collaborate closely with the end users who buy the machines, creating a supply-and-demand dynamic that yields the systems best suited to strengthening U.S. domestic competitiveness.

International Supercomputing Leadership

Given the critical importance of supercomputing to countries’ economic and national security, it’s no surprise that many nations and regions are competing fiercely for supercomputing leadership, whether with regard to producing the world’s fastest supercomputers, the most supercomputers, the most aggregate supercomputing capacity, or the most effective ways to leverage this computational power. Indeed, “supercomputers have long been a flash point in international competition.”[43] As one report pithily observes, “To out-compute is to out-compete.”[44] However, while each of those factors matters, so too does the accessibility, usability, and usefulness of those supercomputing assets. To offer a Cold War analogy, the USSR may have usually fielded the fastest fighter jets, but if they were often in the hangar due to design flaws or missing spare parts, they were of limited value. In other words, it’s not always about the shiniest, fastest, or greatest number of objects, but how functional and useful they truly are.

While the performance capabilities of a nation’s supercomputers certainly matter, what matters even more is the ability of researchers to effectively leverage HPC resources to meaningfully solve real-world scientific, technical, and engineering problems.

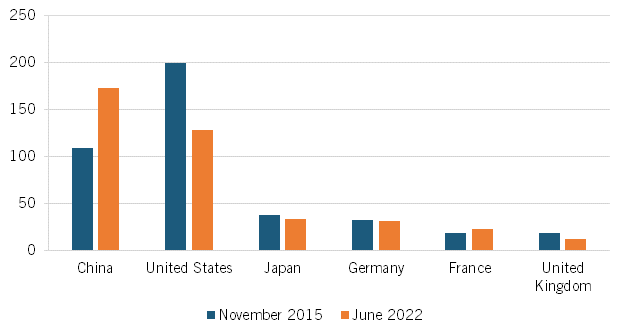

As of November 2015, the United States fielded 199 of the world’s top 500 supercomputers, compared with China’s 109; by June 2022, however, China had flipped the script, fielding 173 of the 500 fastest supercomputers to the United States’ 128. (See figure 6.) This represented a 58 percent increase in the number of Chinese supercomputers in the top 500, while the U.S. count fell by just over one-third. (The counts of the next-ranked countries remained roughly steady, with Japan falling from 37 to 33 supercomputers in the top 500 and Germany increasing from 31 to 32 over that period, meaning the big shift came in the relative positions of China and the United States.) It should also be noted that China appears to have stopped submitting its newest supercomputers to the top 500 list (with as many as 7 of the top 10 fastest supercomputers now actually being Chinese), potentially in part because China fears the United States might impose further export control restrictions on U.S. chips and chip technologies. In fact, two Chinese supercomputers are reported to have each reached exaflop status in 2021 in terms of both theoretical and realized performance, although these supercomputers haven’t been submitted to the top 500 list.[45]
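The percentage changes cited above can be recomputed from the counts given in the text:

```python
def percent_change(before: float, after: float) -> float:
    """Percentage change from before to after."""
    return (after - before) / before * 100


# Counts of top 500 systems, November 2015 versus June 2022, as cited in the text.
counts = {
    "China": (109, 173),
    "United States": (199, 128),
    "Japan": (37, 33),
    "Germany": (31, 32),
}
for country, (nov_2015, jun_2022) in counts.items():
    print(f"{country:>13}: {percent_change(nov_2015, jun_2022):+.1f}%")
```

This gives about +58.7 percent for China and about -35.7 percent for the United States, consistent with the “58 percent” and “just over one-third” figures, alongside roughly -10.8 percent for Japan and +3.2 percent for Germany.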

Figure 6: Number of supercomputers in the top 500, by select country[46]

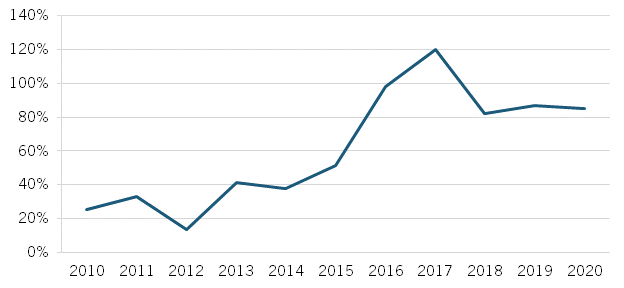

But, again, a count of which country has the most supercomputers is somewhat simplistic. When considering aggregate Rmax (the sum of each listed system’s maximum achieved performance on the LINPACK benchmark), a measure of nations’ cumulative supercomputing power, China’s total stands at about 80 percent of the U.S. level. (See figure 7.) China had surpassed the United States on this measure in 2017, but the introduction of several new U.S. supercomputers in the late 2010s, even before Frontier brought the United States to exascale, shifted this indicator back in the United States’ favor.

Figure 7: China’s cumulative supercomputing capacity as a share of U.S. total, 2010–2020[47]

Moreover, China still struggles with maximizing the potential impact of its supercomputers. While the country has shown it can build massively parallel, fast supercomputers, it lags behind at developing innovative software applications that can leverage these supercomputers to generate new insights and discoveries across a wide range of fields. As HPCwire’s Tiffany Trader put it, “China’s challenge has been a dearth of application software experience.”[48] For example, China’s Tianhe-2 supercomputer “is reportedly difficult to use due to anemic software and high operating costs [including] electricity consumption that runs up to $100,000 per day.”[49] In short, China’s HPC approach thus far appears to have emphasized performance speeds over practical applications, meaning the functionality of its machines lags behind that of machines in Europe and the United States.[50]

In contrast, the United States has concentrated heavily on, and invested billions in, developing software and applications that can run effectively on the nation’s supercomputing infrastructure, in addition to training researchers who can take advantage of these tools. For instance, the Extreme Scale Scientific Software Stack (E4S) seeks to demystify complicated HPC hardware and software, lowering the barrier to entry for others to use HPC. It represents a community effort to provide open source software packages for developing, deploying, and running scientific applications on HPC platforms, providing from-source builds and containers for a broad collection of HPC software packages.[51] Similarly, the NCSA at the University of Illinois Urbana-Champaign is a center of advanced cyberinfrastructure and expertise that serves as a hub for transdisciplinary research, uniting academic institutions and global companies in search of answers to the world’s most challenging problems.[52] In other words, its focus is on democratizing HPC access for U.S. academic researchers and for businesses large and small alike. According to Bob Sorensen, senior vice president of research for Hyperion Research, “Where the United States has clearly excelled [compared with other nations] in HPC is concentrating on access to and the usability of its national HPC infrastructure so that HPC can be effectively deployed to solve academic and industrial research challenges.”[53]

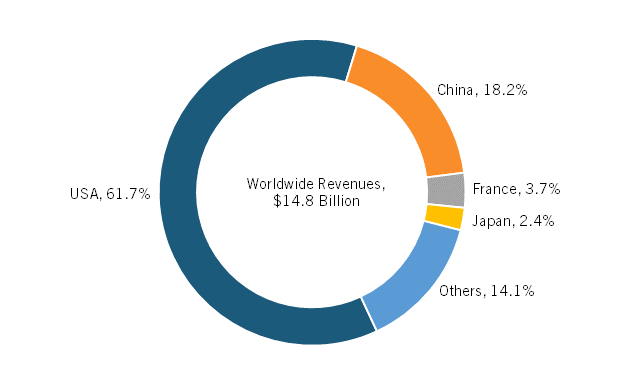

Figure 8: On-premises revenues of HPC server vendors, by country of headquarters, 2021[54]

U.S.-headquartered enterprises clearly lead in selling on-premises HPC server systems. In 2021, they commanded 61.6 percent of the on-premises HPC market, followed by Chinese companies with an 18.3 percent share and French and Japanese ones with 3.7 and 2.4 percent, respectively.[55] (See figure 8.) That same year, North America accounted for 42 percent of global HPC server consumption, Europe 28 percent, Asia outside Japan (though largely China) 22 percent, and Japan 6 percent.[56]
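To put the 2021 shares side by side, the Python sketch below computes how much of each breakdown is left unattributed and how large the U.S. vendor share is relative to China’s. It uses only the percentages quoted in this paragraph; the “unlisted” remainders are simply whatever those figures do not account for.

```python
# 2021 on-premises HPC server revenue share by vendor headquarters (percent), per the text.
vendor_share = {"United States": 61.6, "China": 18.3, "France": 3.7, "Japan": 2.4}

# 2021 HPC server consumption by region (percent), per the text.
consumption = {"North America": 42, "Europe": 28, "Asia (excl. Japan)": 22, "Japan": 6}

# Remainder not attributed to the listed countries/regions.
other_vendors = round(100.0 - sum(vendor_share.values()), 1)
other_regions = 100 - sum(consumption.values())

# How large the U.S. vendors' share is relative to the Chinese vendors' share.
us_to_china = vendor_share["United States"] / vendor_share["China"]

print(f"Unlisted vendors: {other_vendors}%  |  unlisted regions: {other_regions}%")
print(f"U.S. vendor share is {us_to_china:.1f}x China's")
```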

Next-Generation Commercial Applications of HPC

Exascale-era HPC is unlocking breakthrough innovation across numerous U.S. industries, from aerospace and automotive to consumer packaged goods (CPG), energy, and life-sciences sectors (among many others). For U.S. industry, HPC applications accelerate R&D activities, make entirely new product designs or structures possible, speed time to market, decrease costs, enhance energy efficiency, and transform go-to-market business models. This section highlights some of the latest applications of HPC driving U.S. industrial competitiveness forward.

HPC Enabling Aerospace Innovation

HPC is dramatically transforming both aircraft and jet engine design and innovation.

Aircraft Design and Manufacturing

HPC has fundamentally transformed how companies such as Airbus and Boeing design and manufacture aircraft, with HPC being applied to determine the aerodynamic performance of entire airplanes, including virtually every surface on an aircraft; the optimum structural design of every aircraft component, from bulkheads to wheels, in order to minimize weight; and, in the military domain, even the radar cross section of stealthy platforms. For Boeing, HPC enables faster solutions to more complex problems, more accurate results with improved performance, enhanced safety and environmental acceptability of products, quicker development timelines and thus swifter time to market, and lower overall development costs.[57]

As Jim Glidewell, a senior HPC analyst at Boeing, explained, Boeing deploys HPC for two principal reasons: achieving cost savings and generating positive ROI.[58] With regard to the first, high-fidelity simulation can significantly reduce the number of wind tunnel tests required in aircraft development, which matters when each such test can cost $10 million or more. For instance, Boeing physically tested 77 prototype wing designs for its 767 aircraft (which was designed in the 1980s), but for its 787 Dreamliner, only 11 wing designs were physically tested in a high-speed environment (a sevenfold reduction), primarily because over 800,000 hours of supercomputer simulations had drastically reduced the need for physical prototyping.[59] For the next generation of commercial airplanes, performing even more of this work virtually could mean as few as three or four wind tunnel tests are needed. On the ROI side of the equation, even very small improvements in fuel economy can result in tremendous operational efficiencies. For instance, reducing a plane’s drag by even a single-digit percentage can result in fuel savings of millions of dollars over a plane’s service life, with one airline estimating that reducing an aircraft’s weight by one pound can save 53,000 liters of fuel annually, adding up to tens of thousands of dollars in savings, in addition to delivering significant environmental benefits.[60] HPC is also deployed so that more configuration deviations can be assessed earlier in the design process, improving overall safety.

Perhaps the most intricate component of an aircraft is its wing—which is fundamentally what gives an aircraft lift and thus enables flight—and supercomputing has exerted a tremendous impact on wing design. Consider the Boeing 787 Dreamliner, the first large commercial transport aircraft with a fully composite wing—that is, one built from carbon fiber as opposed to aluminum. Joris Poort (then a Boeing engineer, now CEO of enterprise software firm Rescale) has explained that with aluminum, “there is a simple choice for the thickness of a panel.… with carbon fibre every single layer will have over 50 different angles layered on top of each other, which will be cooked in an oven or autoclave.… the number of variables [we had to consider] went from thousands to tens of millions.”[61] To design the lightest, lift-maximizing wing possible for the 787 (with the appropriate structural requirements), Boeing evaluated over 50 million variables using supercomputers. As Poort noted, HPC helped Boeing save “over 115 pounds on the wing design [on the Dreamliner, which was worth] about $180m [at the time].”[62]

Mastering the intricacies of computational fluid dynamics (CFD), a branch of fluid mechanics that uses numerical analysis and data structures to analyze and solve problems that involve fluid flows, represents one of the most significant challenges to realizing the next generation of aircraft design. As Joerg Gablonsky, a Boeing technical fellow and chair of the HPC Enterprise Council, explained, “Exascale technology is critical for Boeing to design our current and future products; and the next generation of HPC will expand the areas of the flight envelope we can effectively and accurately simulate through CFD analysis.”[63]

To be sure, CFD has long been integral to aircraft design, impacting wing, wing tip, and vertical tail design; fuselage and cabin design; and engine inlet and exhaust system design, among other aircraft attributes. But as a NASA report, “CFD Vision 2030 Study: A Path to Revolutionary Computational Aerosciences,” explains, “In spite of considerable successes, reliable use of CFD has remained confined to a small but important region of the operating design space due to the inability of current methods to reliably predict turbulent-separated flows.”[64] What this essentially means is that CFD has been calibrated only in relatively small regions of a commercial aircraft’s operating envelope where the external air flow is well modeled by current methods (e.g., in cruise conditions with stable flight at altitude), and there is opportunity to expand CFD to the edges of the flight envelope, such as in flaps-down situations where there exists unsteady, turbulent air flow. As the NASA report explains, one grand challenge is therefore leveraging HPC for CFD “to simulate the flow about a complete aircraft geometry at the critical corners of the flight envelope including low-speed approach and takeoff conditions, transonic buffet, and possibly undergoing dynamic maneuvers, where aerodynamic performance is highly dependent on the prediction of turbulent flow phenomena.”[65] Further, going forward, as exascale-era HPC-powered M&S becomes more capable, CFD analysis will expand to additional applications including reducing noise (both in cabin and externally from the aircraft), control failure analysis, and designing wing and edge controls.[66] Moreover, as Gablonsky noted, CFD simulations that once took a month can now be performed in days or even hours on exascale-capable machines. 

In summary, HPC helps enable a more accurate representation of aircraft performance across the entire flight envelope, improves and speeds up product design, and accelerates time to market while reducing costs.

One other notable area in which supercomputing is impacting aircraft design is noise abatement. Aircraft and engine noise propagates from a small space to miles in all directions, at varying frequencies, making proper modeling of the acoustical properties of sound waves a daunting challenge. Heretofore, governments required laboratory or flight tests to validate the acceptability of airplane configurations to satisfy community noise requirements. But exascale-powered M&S holds the promise that this can be accomplished by computer.[67] However, as Gablonsky explained:

To simulate noise requires much larger meshes (meshing refers to defining continuous geometric shapes [e.g., 3D models] using simplified 1D, 2D, and 3D shapes) than we currently use, and which must be run in a time accurate manner. Right now it takes weeks to get a few seconds of time data for simplified geometries, making it impractical for design. Exascale technologies will enable us to do these types of simulations efficiently, and in timeframes where we can incorporate design decisions earlier into the aircraft design cycle.[68]

HPC is also playing a key role in military aircraft design. In September 2020, the United States Air Force (USAF) introduced an “e”-series aircraft designation, with Secretary of the Air Force Barbara Barrett explaining that USAF created the nomenclature to “inspire companies to embrace the possibilities presented by digital engineering.” USAF also noted, “An eSeries digital acquisition programme will be a fully-connected, end-to-end virtual environment that will produce an almost perfect replica of what the physical weapon system will be.”[69] The first aircraft to receive the designation was the Boeing eT-7A Red Hawk, the service’s next-generation jet trainer, which was designed completely virtually using model-based engineering and 3D design tools. In other words, it’s the first aircraft to be fully designed, modeled, and tested using (super)computers.[70] USAF noted that digital engineering allowed Boeing to “seamlessly transform its schematic into a metal aircraft” with few time-consuming design errors, that 80 percent fewer assembly hours were needed compared with a conventional development method, and that the jet “moved from computer screen to first flight in just 36 months.”[71]

Lastly, these examples highlight one other reason HPC matters immensely to U.S. industrial competitiveness. In procurement, aircraft (and engine) manufacturers, in both the civilian and military domains, often guarantee a set of performance attributes for their customers four or five years before the first plane (or engine) ever flies. HPC-powered computational tools are indispensable to ensuring accurate modeling with a high degree of confidence that the end product will reliably meet the customers’ requirements, at a profitable price point for the manufacturer. In other words, the effective application of HPC is integral to the competitiveness of America’s aerospace industry.

Jet Engine Design and Manufacturing

HPC is also playing an integral role in facilitating the next generation of jet engine design. CFM, a joint venture between General Electric (GE) and Safran S.A., is currently working to develop a next-generation, open-fan engine design via the Revolutionary Innovation for Sustainable Engines (RISE) jet engine program. (See figure 9.) RISE has a goal of achieving a 20 percent reduction in fuel burn along with a corresponding reduction in carbon emissions (near elimination being possible as hydrogen becomes a feasible fuel source), further building upon the 15 percent increase in fuel efficiency CFM achieved with its current-generation LEAP (Leading Edge Aviation Propulsion) engine.[72]

Figure 9: Evolution of GE/CFM jet engines[73]

Like Boeing, GE needs to master CFD, so it has partnered with national labs such as LLNL and ORNL to develop high-resolution turbulence models to help design RISE with improved aerodynamic performance, durability, and fuel efficiency. The goal is to accurately simulate air flows and their turbulence under realistic operating conditions for the engine. As Arthur explained:

When we started simulations years ago, we could only simulate airflows between one pair of blades of a turbine at a time, which was of limited use because many crucial machine dynamics encompass more than that small area. As we have scaled up over time, first to multiple blades, then to multiple rows of blades, and then to the multiple stages of the engine, to the full annulus [i.e., the entire circumference], we have surpassed the needed thresholds to gain insight into these dynamics and perhaps ultimately one day will be able to perform simulations of the entire engine.[74]

While GE possesses in-house HPC resources, conducting end-to-end, full annulus M&S of new engines will require massive computational power, in part because of the variety of flight conditions that must be accounted for (different atmospheric, altitude, and weather conditions; different flight and lift conditions, such as takeoff vs. cruise vs. landing; etc.). GE has therefore partnered with ORNL in the past to use its 200-petaflop Summit supercomputer, and going forward will run M&S on Frontier as well. Thus, HPC is instrumental in helping engine designers navigate the vast variety of design options and operating conditions, giving them the greatest chance of arriving at an optimal engine before one is ever fabricated. Arthur noted, “One can’t test a jet engine [let alone a jet aircraft or wind turbine] in a wind tunnel, since those facilities are smaller than the actual product. So companies build a scale-miniaturized version of the product and a ‘rig test’ is performed that provides initial performance data, although the product is not tested at scale until the flight test.”[75] And just as in the aircraft example, jet engine makers sell new engines based on modeled performance specifications before the first production unit is ever manufactured. Here, Arthur has observed what a difference maker exascale can be: “To run the takeoff test simulation at the rig-test scale, that simulation would be about 70,000 node hours on Summit … that same simulation at product scale would be over 6 million node hours on Summit. So we love Frontier,” because Frontier’s exascale performance, compared with Summit’s 200 petaflops, will allow GE to run such simulations roughly five times faster, and potentially faster still.[76] As GE pushes toward the next generation of hydrogen-powered, open-fan jet engines as envisioned in RISE, HPC will play an indispensable role.
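A rough back-of-the-envelope calculation shows why the jump from rig scale to product scale is so demanding. The Python sketch below uses the node-hour figures Arthur quotes, plus two assumptions that are not from the source: that the entire 4,608-node Summit machine is dedicated to the run, and that Frontier’s advantage equals the roughly fivefold ratio of peak performance (about 1,000 vs. 200 petaflops). Real speedups depend heavily on the application code.

```python
# Figures quoted by GE's Arthur for a takeoff simulation on Summit.
RIG_SCALE_NODE_HOURS = 70_000
PRODUCT_SCALE_NODE_HOURS = 6_000_000

# Assumptions (not from the source): all of Summit's 4,608 nodes are available,
# and Frontier's advantage equals the ~5x ratio of ~1,000 to ~200 petaflops.
SUMMIT_NODES = 4_608
PEAK_RATIO = 1_000 / 200

scale_up = PRODUCT_SCALE_NODE_HOURS / RIG_SCALE_NODE_HOURS
summit_days = PRODUCT_SCALE_NODE_HOURS / SUMMIT_NODES / 24
frontier_days = summit_days / PEAK_RATIO  # naive; real speedups are code-dependent

print(f"Product scale needs ~{scale_up:.0f}x the rig-scale node hours")
print(f"Whole-Summit wall clock: ~{summit_days:.0f} days")
print(f"Naive Frontier estimate: ~{frontier_days:.0f} days")
```

Under these assumptions, a single product-scale takeoff simulation would monopolize all of Summit for roughly 54 days, versus about 11 days on Frontier, which helps explain why GE views exascale as a difference maker.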

And indeed, more fuel-efficient (and cleaner-burning) engines are a differentiator in the marketplace. As Hyperion Research’s Earl Joseph, Steve Conway, and Bob Sorensen have explained, each year, about $200 billion worth of fuel is consumed globally in GE’s gas turbine products, including aircraft engines and land-based gas turbines used for the production of electricity.[77] Every 1 percent reduction in fuel consumption therefore saves the users of these products $2 billion combined per year, and “any company that can achieve even 1% improvement in efficiency can potentially cause market disruption, as the resultant efficiencies would overtime [sic] add up to enormous cost savings to customers, thus providing market advantage.”[78]
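The arithmetic behind the $2 billion figure is simple proportionality. The Python sketch below generalizes it to a few efficiency gains; the 10-year horizon is a hypothetical chosen for illustration, and it assumes (as the text implicitly does) that annual fuel spend stays constant at about $200 billion.

```python
ANNUAL_FUEL_SPEND_USD = 200e9  # fuel burned in GE gas turbine products per year, per the text
HORIZON_YEARS = 10             # hypothetical horizon, not from the source


def annual_savings(efficiency_gain_pct: float) -> float:
    """Customer fuel savings per year for a given percentage cut in fuel burn."""
    return ANNUAL_FUEL_SPEND_USD * efficiency_gain_pct / 100


for pct in (0.5, 1.0, 2.0):
    print(f"{pct:.1f}% less fuel -> ${annual_savings(pct) / 1e9:.1f} billion per year")

# Cumulative savings from a 1% gain, assuming constant fuel spend over the horizon.
cumulative_1pct = annual_savings(1.0) * HORIZON_YEARS
print(f"1% over {HORIZON_YEARS} years -> ${cumulative_1pct / 1e9:.0f} billion")
```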

Elsewhere, LIFT (one of America’s 16 Manufacturing USA Institutes, focused on developing and deploying advanced lightweight materials manufacturing technologies) has leveraged HPC to develop advanced materials supporting the development of lighter and thus more energy-efficient jet engines. Specifically, LIFT has partnered with LLNL to “[e]valuate stress/strain behavior of aluminum and Al-Li alloy for different lithium content, shapes, and volumes” and “model validation of dislocation mobility, a property of alloys under stress.”[79] LIFT estimated that over 13 million gallons of jet fuel can be saved per year industry-wide by using Al-Li alloys.[80]

HPC Enabling Automotive Innovation and Mobility Solutions

As Automotive World’s Alyssa Altman wrote, “The future of the automotive industry relies on the ability to leverage HPC.”[81] Of course, HPC has long been used to design more fuel-efficient vehicles. For instance, South Carolina-based BMI Corp. has developed SmartTruck technology using supercomputer resources from ORNL that could save 1.5 billion gallons of diesel fuel and $5 billion in fuel costs per year.[82] As BMI CEO Mike Henderson explained, “We were able to run simulations based on the most complex tractor and trailer models instead of simplified models, and we were able to run them faster.”[83] Specifically, BMI used HPC to improve the aerodynamics of 18-wheel (Class 8) long-haul trucks, with typical big rigs achieving fuel savings of between 7 and 12 percent.[84] Moreover, as in aerospace, access to HPC “shortened the computing turnaround time for BMI’s complex models from days to a few hours and eliminated the need for costly and time-consuming physical prototypes,” allowing BMI to go from concept to a design that could be turned over to a manufacturer in 18 months instead of the 3.5 years it had originally anticipated.[85]
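Two quantities are implicit in the BMI figures: the diesel price behind the paired gallon and dollar estimates, and the share of the planned schedule that HPC access eliminated. The Python sketch below derives both from the numbers quoted above; it assumes the two savings estimates refer to the same annual fleet-wide scenario.

```python
# BMI SmartTruck figures quoted in the text.
GALLONS_SAVED_PER_YEAR = 1.5e9
DOLLARS_SAVED_PER_YEAR = 5e9
PLANNED_MONTHS = 3.5 * 12  # original 3.5-year estimate
ACTUAL_MONTHS = 18

# Diesel price implied by the two savings estimates (USD per gallon).
implied_price = DOLLARS_SAVED_PER_YEAR / GALLONS_SAVED_PER_YEAR
# Share of the planned development schedule that HPC access removed.
schedule_cut = 1 - ACTUAL_MONTHS / PLANNED_MONTHS

print(f"Implied diesel price: ${implied_price:.2f}/gal")
print(f"Schedule: {PLANNED_MONTHS:.0f} -> {ACTUAL_MONTHS} months ({schedule_cut:.0%} shorter)")
```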

As auto manufacturers now look to design a new generation of connected and autonomous vehicles (CAVs), “HPC fosters the ability to render a model quickly, create prototypes remotely and design virtual crash tests.”[86] Indeed, developing and training CAVs and simulating more efficient traffic flows at scale requires the power and performance only HPC can provide.[87] As Altman wrote, “Without HPC, there is no way to accelerate the data-intensive process of the vehicles’ response systems.”[88] In other words, HPC will play a key role in helping design CAVs that reduce accidents caused by human error and help reduce congestion (by being able to communicate with other vehicles and transportation infrastructure). HPC will also play a role in facilitating the deployment of intelligent transportation systems more broadly. For instance, the University of Michigan has invested in a supercomputer that supports ML applications to enable researchers in its MCity program, which develops intelligent transportation systems, to perform more complex simulations and better train DL models to recognize signs, pedestrians, and hazards.[89]

HPC Enabling Consumer Packaged Goods Innovation

CPG companies such as Procter and Gamble (P&G) leverage supercomputing to understand formulations down to the molecular level across a wide range of products such as cosmetics, shampoos, soaps, and diapers, thereby improving product quality and performance, in part because HPC helps companies identify molecular characteristics not observable experimentally.[90] At P&G, HPC exerts significant impact on process design and optimization, material selection, product and package design, and supply chain optimization. As Alison Main, senior director of R&D at P&G, explained, HPC-powered M&S “is now the way we do work … [from] fragrance optimization and formula design to assessing fit and fluid absorption for feminine hygiene products.”[91] P&G maintains its own supercomputing hardware, but also partners with U.S. national labs such as LANL and LLNL when it requires additional computing power. As Main explained, “Over the last decade, HPC has helped P&G save over $1 billion through replacing physical experiments, optimizing equipment design, increasing production capacity, and qualifying more efficient materials.”[92] In one case, P&G’s use of simulation and modeling allowed it to reduce the number of steps involved in a process design by over 50 percent.[93]

One celebrated application of HPC-powered M&S at P&G was understanding the optimal way paper fibers should contact one another (e.g., in paper towels or toilet paper) so as to maximize papers’ texture, absorption, softness, and fit within packaging. P&G partnered with LLNL to develop a large, multiscale model of paper products that simulated thousands of fibers with a resolution to the micron scale, with a project goal of reducing the paper pulp in P&G products by up to 20 percent.[94] To do so, LLNL developed a parallel computing program called “p-fiber,” which could quickly prepare the fiber geometry and meshing input data needed to simulate thousands of fibers. In the model, each individual paper fiber was represented by as many as 3,000 “bricks” or finite elements, and the model generated up to 20 million finite elements and modeled 15,000 paper fibers.[95] P&G partnered with LLNL on the project not just for the raw computational power of the lab’s supercomputers but also for the faster design cycles they enabled. As LLNL researcher Will Elmer explained, “We found that you can save on design cycle time. Instead of having to wait almost a day (19 hours), you can do the mesh generation step in five minutes. You can then run through many different designs quicker.”[96] In total, LLNL was able to run design simulations up to 225 times faster than meshing the fibers sequentially on P&G’s computer.[97] The effort yielded important insights into the structure of fibers and papers, including into their texture, durability, and tearability.
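The quoted timings and model sizes can be cross-checked against each other. The Python sketch below computes the meshing speedup implied by Elmer’s “19 hours vs. five minutes” and the average number of finite elements per fiber. It assumes that the 20-million-element and 15,000-fiber figures describe the same model run, which the text implies but does not state outright.

```python
# P&G/LLNL paper-fiber meshing figures quoted in the text.
SEQUENTIAL_MINUTES = 19 * 60   # ~19 hours on P&G's computer
PARALLEL_MINUTES = 5           # with LLNL's parallel p-fiber tool
FIBERS = 15_000
MAX_ELEMENTS = 20_000_000      # "up to 20 million finite elements"

speedup = SEQUENTIAL_MINUTES / PARALLEL_MINUTES
# Assumes the element and fiber counts refer to the same model.
avg_elements_per_fiber = MAX_ELEMENTS / FIBERS

print(f"Meshing speedup: ~{speedup:.0f}x")
print(f"Average elements per fiber: ~{avg_elements_per_fiber:,.0f}")
```

The resulting roughly 228x speedup is in line with the “up to 225 times faster” figure, and an average of about 1,333 elements per fiber sits comfortably below the per-fiber maximum of 3,000.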

Main further explained, “In addition to product design and downstream product and process validation, like drop testing and package conveying, P&G also uses HPC to build our foundational understanding of chemical and substrate behavior which informs our innovations of the future.”[98] Main noted that P&G is partnering with Sandia National Laboratories to optimize its process for manufacturing porous materials, guiding design and reducing the energy required to make sustainable substrates for paper, feminine hygiene, and other absorbent products. P&G has also worked with LLNL to develop methods that leverage HPC to couple disparate time and length scales in molecular simulations.[99] These methods have enabled the creation of models of small molecules interacting with bacterial membranes to inform the development of new antimicrobial chemistries to enhance the performance of P&G’s antibacterial products.[100] As Main concluded, “Going forward, HPC will help P&G innovate with increasingly complex natural and recycled materials, bring new products to market faster, and enable us to explore more possibilities than we could dream of imagining with physical experimentation alone.”[101]

HPC Enabling U.S. Life Sciences Innovation

Exascale-era HPC promises to unleash a wide range of new biomedical discoveries and innovations and is already making tremendous contributions in oncology research and drug discovery. HPC also played a pivotal role in helping the global biomedical community tackle the COVID-19 pandemic. HPC has long been used in computational drug discovery and design, wherein techniques such as molecular simulation can help model a biological target associated with a disease and identify drugs that might effectively bind to that target while also achieving a desired therapeutic outcome. But when the range of possible drug compounds is large, this process can take a very long time, and the cost of running many simulations is high, slowing down the creation of life-saving drugs. HPC-enabled ML can complement this process by first screening the known range of drug candidates so that testing and simulation focus only on those with the right features to be successful, with a 2019 GAO study estimating that ML can produce R&D cost savings of $300 million to $400 million per successful drug by accelerating drug discovery.[102] The following section examines how HPC has facilitated COVID-19 vaccine and therapeutic development, advanced oncology and Alzheimer’s drug research and innovation, and helped make gene sequencing possible.
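The two-stage workflow described above (a cheap ML screen followed by expensive simulation of only the most promising candidates) can be illustrated with a toy funnel. The Python sketch below is hypothetical throughout: the compound library is random, the “surrogate model” is a fixed linear score standing in for a trained ML model, and `expensive_simulation` is a placeholder for a costly HPC job such as docking or molecular dynamics. It is not the method of any organization named in this report.

```python
from __future__ import annotations

import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Compound:
    name: str
    features: tuple[float, ...]  # hypothetical precomputed molecular descriptors


def surrogate_score(compound: Compound, weights: tuple[float, ...]) -> float:
    """Cheap stand-in for a trained ML model: a linear score over descriptors."""
    return sum(w * f for w, f in zip(weights, compound.features))


def expensive_simulation(compound: Compound) -> float:
    """Placeholder for a costly HPC job; returns a dummy 'binding energy'."""
    return -sum(f * f for f in compound.features)


def screen_then_simulate(library, weights, top_k):
    # Stage 1: rank the entire library with the cheap surrogate model.
    ranked = sorted(library, key=lambda c: surrogate_score(c, weights), reverse=True)
    shortlist = ranked[:top_k]
    # Stage 2: spend expensive simulation time only on the shortlist.
    results = {c.name: expensive_simulation(c) for c in shortlist}
    return shortlist, results


rng = random.Random(0)  # fixed seed so the toy library is reproducible
library = [
    Compound(f"cmpd-{i}", tuple(rng.uniform(-1, 1) for _ in range(4)))
    for i in range(10_000)
]
weights = (0.8, -0.5, 0.3, 0.1)  # hypothetical model weights
shortlist, results = screen_then_simulate(library, weights, top_k=100)
print(f"Simulated {len(results)} of {len(library)} candidates")
```

The design point is the ratio between the two stages: the expensive step runs on 1 percent of the library, so any savings depend on how well the cheap model ranks true hits near the top.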

COVID-19

During the COVID-19 pandemic, “nearly every public research supercomputer pivoted to some form of COVID research.”[103] For instance, weeks into the pandemic, an ORNL supercomputer, Summit, was tapped to run simulations of over 8,000 drug compounds to identify those most likely to prevent the virus from infecting host cells.[104] The ORNL team identified 77 compounds that represented promising candidates for testing by medical researchers. As Jeremy Smith, director of the University of Tennessee/ORNL Center for Biomolecular Physics and principal researcher for the study, explained, “Summit was needed to rapidly get the simulation results we needed. It took us a day or two whereas it would have taken months on a normal computer.”[105] The overall U.S. effort to leverage HPC in the pandemic fight was spearheaded by the COVID-19 HPC consortium, a unique public-private partnership between government, industry, and academia that provided a single point of access to the nation’s HPC and cloud-computing systems as well as a review mechanism to evaluate research proposals and ensure the most promising ones were prioritized, given both the high volume of research proposals submitted and limited supercomputing capacity.[106]

The consortium quickly reviewed over 200 research proposals and got 114 up and running on the nation’s supercomputing infrastructure.[107] Some of the notable outcomes included the following highlights:

▪ Experimental testing of predicted compounds proposed by University of Tennessee researchers led to the discovery of several new inhibitors of viral proteins and the identification of three already-approved drug compounds that inhibit the infectivity of live coronavirus, with two of the identified compounds subsequently entering clinical trials.[108]

▪ Michigan State researchers used an IBM supercomputer to perform analysis and predictive modeling of potential SARS-CoV-2 mutations and their impact on diagnostic testing, vaccines, and therapeutics (leading to several journal publications).[109]

▪ Applying a new transcription-based drug-pair synergy approach, Mt. Sinai researchers used supercomputers to model 35 billion predictions to identify 10 drug pairs predicted to target the COVID-19 protein interactions.[110]

While scores more examples exist, suffice it to say that supercomputing represented an indispensable part of America’s COVID-19 response and undoubtedly contributed to one of the most rapid developments of vaccines and therapeutics in human history.

For Pfizer, supercomputing proved instrumental in the development of both its COVID-19 vaccine as well as its COVID-19 therapeutic. As Lidia Fonseca, executive vice president and chief digital and technology officer for Pfizer, explained:

Supercomputing helped us to fast-track the progression from discovery to development for Paxlovid, our oral treatment. Using sophisticated computational modeling and simulation techniques, we can now test molecular compounds in a virtual rather than physical lab environment. In the case of Paxlovid, this enabled us to test a fraction of the millions of known compounds that might have worked to treat COVID-19 so that we could quickly narrow down to just those compounds that had the highest chance of becoming medicines.[111]

As Vassilios Pantazopoulos, head of scientific computing and HPC at Pfizer, elaborates, “HPC-powered, large-scale modeling and simulation can impact biomedical innovation all the way from the earliest-stage research through to clinical trials, and one reason exascale computing matters is that it can enable an exponential speed-up in the ability to tackle research problems that were heretofore intractable due to the extent of computational power needed to develop models (i.e., of molecules or proteins) of the scale and size required for them to be accurate and useful for research scientists.”[112] Pantazopoulos notes that Pfizer ran millions of simulations in its drug discovery efforts over the past year, the vast majority of which were focused on the development of COVID-19 vaccines and therapeutics. He notes the company employs a large number of computational scientists who leverage supercomputing capabilities to accelerate the drug discovery process on a daily basis.

Fonseca further elaborated on how supercomputing and advanced analytics helped facilitate development of the COVID-19 vaccine: “Many of the allergic reactions that clinical trial participants reported while testing our vaccine resulted from certain lipid nanoparticles in the vaccine itself. Using supercomputing, we ran molecular dynamics simulations to find the right combination of lipid nanoparticle properties that reduce allergic reactions, thereby creating as safe and effective a vaccine as possible.”[113]

In addition to simulating lipid nanoparticles to identify the set of properties that best reduced allergic reactions, molecular simulations also proved useful in making the mRNA vaccine more resilient to temperature changes, which improved the vaccine’s storability, transportability, and accessibility. Beyond vaccine design, large-scale fluid dynamics simulations also helped Pfizer optimize and scale up its vaccine manufacturing process. While Pfizer’s development of COVID-19 vaccines and therapeutics offers one salient and compelling example, HPC-powered M&S supports the development of many of the nearly 100 other innovative drugs in Pfizer’s pipeline.[114]

Cancer Research and Detection

From private enterprises to universities to U.S. government agencies such as DOE and the National Institutes of Health (NIH), HPC is facilitating cancer research and drug discovery. For instance, DOE and NIH’s National Cancer Institute (NCI) have launched a joint effort to develop new therapies and improve the ability to detect cancers at an earlier stage.[115] The DOE-NCI collaboration seeks to bring the power of HPC to bear on three specific areas of cancer research:

1. Cellular-level: advance the capabilities of patient-derived pre-clinical models to identify new treatments

2. Molecular-level: further understand the basic biology of undruggable targets

3. Population-level: gain critical insights on the drivers of population-level cancer outcomes.[116]

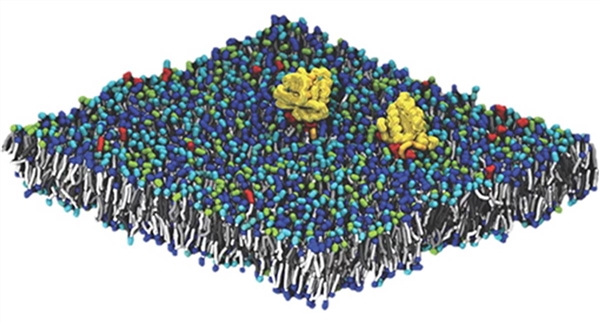

Their collaboration specifically targets one protein family, encoded by the RAS genes, mutations in which cause an estimated 30 percent of cancers and are particularly prevalent in lung, colon, and pancreatic cancers. Although RAS mutations have been studied for decades, RAS has long resisted drug development and effective RAS inhibitors remain scarce, in large part because scientists have lacked a detailed molecular-level understanding of how RAS proteins engage and activate proximal signaling proteins. (See figure 10.) That’s in part because “RAS signaling takes place at and is dependent on cellular membranes, a complex cellular environment,” which is hard to model using conventional techniques.[117]

Figure 10: Simulation capturing the molecular details of RAS proteins in complex lipid membranes[118]

HPC-powered ML applications are now being used to drive multiscale physical simulations that provide a more realistic view of RAS cancer biology, illustrating both the modeling complexity at play and the value of HPC.[119] As Bhattacharya et al. described in “AI Meets Exascale Computing: Advancing Cancer Research With Large-Scale HPC”:

The principal challenge in modeling this system is the diverse length and timescales involved. Lipid membranes evolve over a macroscopic scale (micrometers and milliseconds (ms)). Capturing this evolution is critical, as changes in lipid concentration define the local environment in which RAS operates. The RAS protein itself, however, binds over time and length scales which are microscopic (nanometers and microseconds). In order to elucidate the behavior of RAS proteins in the context of a realistic membrane, our modeling effort must span the multiple orders of magnitude between microscopic and macroscopic behavior.[120]

Leveraging supercomputing assets from DOE and the National Nuclear Security Administration (NNSA) and working with experimentalists at the Frederick National Laboratory, the research team developed a macroscopic model that captures the evolution of the lipid environment and is consistent with an optimized microscopic model that captures protein-protein and protein-lipid interactions at the molecular scale.[121] The researchers simulated, at the macroscopic level, a 1 × 1 μm (one micrometer is one-millionth of a meter), 14-lipid membrane with 300 RAS proteins, generating over 100,000 microscopic simulations that together captured over 200 ms of protein behavior. As the authors note, “This unprecedented achievement represents an almost two orders of magnitude improvement on the previous state of the art.”[122] But as the researchers also noted, with exascale machines “we can substantially increase the dimensionality of the input space and its coverage,” and going forward they will use supercomputers “to include fully atomistic resolution, creating a three-level (macro/micro/atomistic) multiscale model” and to “incorporate membrane curvature into the dynamics of the membrane.”[123]
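The length and time scales quoted above can be made concrete with simple arithmetic. The block below uses only figures from the text; treating “over 100,000” simulations and “over 200 ms” as exact round numbers is an assumption, so the per-simulation average is only indicative.

```latex
\begin{aligned}
\frac{1\,\mu\mathrm{m}}{1\,\mathrm{nm}} &= \frac{10^{-6}\,\mathrm{m}}{10^{-9}\,\mathrm{m}} = 10^{3}, \qquad
\frac{1\,\mathrm{ms}}{1\,\mu\mathrm{s}} = \frac{10^{-3}\,\mathrm{s}}{10^{-6}\,\mathrm{s}} = 10^{3} \\
\frac{200\,\mathrm{ms}}{100{,}000\ \text{simulations}} &= \frac{2 \times 10^{-1}\,\mathrm{s}}{10^{5}} = 2 \times 10^{-6}\,\mathrm{s} \approx 2\,\mu\mathrm{s}\ \text{per simulation}
\end{aligned}
```

In other words, the model must bridge roughly three orders of magnitude in both space and time, and each microscopic simulation covers, on average, a stretch on the microsecond scale at which RAS binding occurs.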

HPC and AI are also working in concert to enable real-time cancer surveillance in the United States. NCI launched the Surveillance, Epidemiology, and End Results (SEER) Program in 1973 to collect and publish cancer incidence and survival data from population-based cancer registries (covering 35 percent of the U.S. population), with the goal of enabling data-driven discovery of the drivers of real-world cancer outcomes.[124] Using AI-driven natural language processing (NLP) tools running on HPC, researchers have been able to accurately classify all five key cancer data elements (cancer site, laterality, behavior, histology, and grade) for 42.5 percent of cancer cases.[125] This is a significant step toward real-time cancer surveillance and toward data-driven M&S of patient-specific health trajectories to support population-level precision oncology research.

Alzheimer’s Disease

Mental and neurological disorders and diseases cost the U.S. economy more than $1.5 trillion per year, as much as 8.8 percent of U.S. GDP.[126] The financial impact of Alzheimer’s disease alone is expected to soar to $1 trillion per year by 2050, with much of the cost borne by the federal government, according to the Alzheimer’s Association report “Changing the Trajectory of Alzheimer’s Disease.”[127] Conversely, if a cure or effective treatment for Alzheimer’s disease were found, the United States could save $220 billion within the first five years and a projected $367 billion in 2050 alone.

Supercomputing is stepping in to help meet the challenge. Researchers at the San Diego, California-based Salk Institute are using supercomputers to investigate how synapses in the brain work, focusing on what happens when neurons send chemical messages across synapses to other neurons. In essence, they are studying the release of neurotransmitters, their diffusion across synapses, and how they bind to receptors. Supercomputers gave the researchers a 150-fold speedup on these increasingly complex simulations.[128]

Elsewhere, in 2021, scientists at UCLA and Johns Hopkins University released research that examined 47,000 images of human brains to study how the brain thins during the early stages of Alzheimer’s disease and how that thinning relates to mild cognitive impairment. As Daniel Tward, an assistant professor of computational medicine and neurology at UCLA, explained, “Until now, we haven’t been able to measure these changes in living people. By using supercomputers like Comet at the San Diego Supercomputer Center at UC San Diego and Stampede2 at Texas Advanced Computing Center, we were able to study a large cohort of patient images over time.”[129] The researchers used supercomputers to observe and quantify thinning in the transentorhinal cortex, a region of the brain’s temporal lobe believed to be the first area affected by Alzheimer’s disease (until now, such changes could be confirmed only at autopsy). The research could be a critical step toward early diagnosis of Alzheimer’s. As Tward noted, using supercomputers “[reduced] computation time from months to days [and] allowed this complex neuroimaging project to be feasible.”[130]

Genome Sequencing

The role of supercomputing in unlocking the secrets of the human genome has been profound. In 2010, a landmark case unfolded involving Nicholas Volker, a four-year-old boy suffering from a mysterious illness that repeatedly attacked his intestines and did not respond to treatments for known ailments such as Crohn’s disease (a type of inflammatory bowel disease).[131] In a last-ditch effort to save Nicholas’s life, doctors sequenced his DNA in hopes of identifying the unknown genetic mutation, making Nicholas one of the first humans to have his genome sequenced for the express purpose of identifying a disease and one of the earliest examples of personalized genomic medicine. (Only 1 percent of Nicholas’s genome was actually sequenced, at a cost of $75,000, as doctors focused on exons, the parts of each gene that contain the recipe for making proteins. Sequencing his whole genome would have cost $2 million at the time and taken months.) The sequencing identified 16,142 variations, sections in which Nicholas’s pattern of DNA base pairs differed from the norm.[132] With the help of supercomputers and a novel software tool, doctors homed in on eight leading suspect gene mutations “and examined them in detail by searching medical literature and gene functions.”[133] Doctors finally traced the culprit to a mutation in the gene XIAP, found on the X chromosome, whose role is to block a process that makes cells die and to help prevent the immune system from attacking the intestines. In most people, the relevant position in XIAP carries the sequence thymine-guanine-thymine, which codes for the amino acid cysteine; in Nicholas’s case, a single base-pair mutation changed it to thymine-adenine-thymine, which codes for a different amino acid, tyrosine. That substitution prevented the protein produced by Nicholas’s XIAP gene from doing its job of protecting the intestines from immune-system attack.

Armed with this knowledge, doctors successfully treated Nicholas with high-dose chemotherapy and an umbilical cord blood infusion.[134] His case was one of the first in which gene sequencing and advanced computational tools such as HPC helped uncover a previously unknown disease and identify an effective treatment.
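The single-base change in Nicholas’s XIAP gene can be illustrated with a few lines of code. This sketch is purely illustrative and is not the software tool the doctors used; the lookup table is a small hand-copied excerpt of the standard genetic code (only the cysteine and tyrosine codons), and the helper names `translate` and `point_mutation` are hypothetical.

```python
# Illustrative sketch only: a tiny excerpt of the standard genetic code
# (DNA coding-strand codons -> three-letter amino-acid codes).
# Only the codons needed for this example are included.
CODON_TABLE = {
    "TGT": "Cys",  # cysteine
    "TGC": "Cys",
    "TAT": "Tyr",  # tyrosine
    "TAC": "Tyr",
}


def translate(codon: str) -> str:
    """Return the amino acid encoded by a codon present in CODON_TABLE."""
    return CODON_TABLE[codon.upper()]


def point_mutation(codon: str, position: int, new_base: str) -> str:
    """Return a copy of `codon` with the base at `position` (0-based) replaced."""
    return codon[:position] + new_base + codon[position + 1:]


reference = "TGT"                            # thymine-guanine-thymine
variant = point_mutation(reference, 1, "A")  # guanine -> adenine at the middle base

print(reference, translate(reference))  # TGT Cys
print(variant, translate(variant))      # TAT Tyr
```

Swapping the middle base from guanine to adenine turns the codon TGT into TAT, and the encoded amino acid changes from cysteine to tyrosine: one letter out of billions, which is why computational tools were needed to sift the thousands of candidate variants.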

As Hans Hofmann, director of the Center for Computational Biology and Bioinformatics at the University of Texas, summarized, “In the life sciences, none of the recent technological advances would have been possible without supercomputers.”[135] He noted that sequencing the first human genome took eight years, thousands of researchers, and about $1 billion; today, researchers can sequence a person’s entire genetic code, about three billion base pairs, for $600 in a matter of hours (with the $100 genome not far behind).[136] And just as an AI-based voice recognition system on a smartphone doesn’t need a supercomputer to run, an individual’s genome can now be sequenced cheaply without one; but that doesn’t diminish the instrumental role HPC played in developing the algorithms and knowledge base that made this possible.
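The scale of the cost decline Hofmann describes can be checked with back-of-the-envelope arithmetic using only the figures quoted above (about $1 billion for the first genome; about three billion base pairs for $600 today):

```latex
\frac{\$1 \times 10^{9}}{\$600} \approx 1.7 \times 10^{6},
\qquad
\frac{\$600}{3 \times 10^{9}\ \text{base pairs}} = \$2 \times 10^{-7}\ \text{per base pair}
```

That is, sequencing a human genome has become roughly 1.7 million times cheaper, at a cost of a few hundred-thousandths of a cent per base pair.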

Clean Energy Innovation

Exascale-era HPC will empower advancements in numerous areas of clean-energy innovation, from the optimization of both wind turbine and wind farm design and construction to management of smart electric grids.

Wind Energy

The ExaWind initiative seeks to leverage HPC technologies in support of the ambition of having renewable, inexhaustible wind energy resources account for as much as 20 percent of U.S. energy needs over the next 10 years.[137] But achieving wide-scale wind energy deployment will depend on understanding, predicting, and reducing plant-level energy losses from a variety of physical flow phenomena, and therefore “requires the ability to predict the fundamental flow physics and coupled structural dynamics that govern whole wind plant performance, including wake formation, complex-terrain impacts, and turbine-turbine interactions through wakes.”[138] In other words, it will require understanding how to design the most productive wind turbines, including turbines that, much as a plant tracks the movement of the sun, adapt in real time to changes in wind speed and direction, as well as how to lay out entire wind farms most effectively (i.e., so that the wake disturbance from turbines in one wind farm doesn’t undermine the energy output of others). That’s a significant challenge, because downstream wake effects can spread over several kilometers and decrease the efficiency of a downstream wind turbine by up to 40 percent, while increasing the “out-of-plane” load (the load acting on turbine blades perpendicular to the rotor plane) by up to 40 percent as well.[139] Success of the ExaWind initiative will require development of an “M&S capability that resolves turbine geometry and uses adequate grid resolution (down to the micrometre scale) … [while also modeling the effect of] atmospheric turbulent eddies and generation of near-blade vorticity and propagation and breakdown of this vorticity, within the turbine wake” to a significant distance downstream.[140]

Wake effects from a wind turbine can decrease the efficiency of downstream turbines by as much as 40 percent, meaning HPC can play a pivotal role in informing not just the design of wind turbine blades but also the positioning of wind turbines, and indeed entire wind farms, relative to one another.
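Why wake losses weigh so heavily on turbine placement can be sketched with the Jensen (Park) wake model, a simple textbook engineering model that is far cruder than the high-fidelity simulations ExaWind targets and is swapped in here purely for illustration. It assumes the wake widens linearly behind the rotor and that turbine power scales with the cube of wind speed. The thrust coefficient (0.8), wake decay constant (0.075), and 120 m rotor diameter are assumed, illustrative values, and the function names are hypothetical.

```python
import math

# Illustrative Jensen (Park) wake-model sketch; all parameter values are assumptions.
THRUST_COEFFICIENT = 0.8   # assumed rotor thrust coefficient C_t
WAKE_DECAY = 0.075         # assumed wake decay constant k
ROTOR_DIAMETER_M = 120.0   # assumed rotor diameter in meters


def velocity_deficit(distance_m: float,
                     ct: float = THRUST_COEFFICIENT,
                     k: float = WAKE_DECAY,
                     diameter_m: float = ROTOR_DIAMETER_M) -> float:
    """Fractional wind-speed deficit inside the wake at `distance_m` downstream.

    Jensen model: deficit = (1 - sqrt(1 - C_t)) / (1 + k * x / r0)**2,
    where r0 is the rotor radius and the wake radius grows as r0 + k * x.
    """
    rotor_radius = diameter_m / 2.0
    return (1.0 - math.sqrt(1.0 - ct)) / (1.0 + k * distance_m / rotor_radius) ** 2


def waked_power_ratio(distance_m: float) -> float:
    """Power of a fully waked downstream turbine relative to an unwaked one.

    Below rated wind speed, power scales roughly with the cube of wind speed.
    """
    return (1.0 - velocity_deficit(distance_m)) ** 3


for spacing in (5, 7, 10):  # turbine spacing measured in rotor diameters
    ratio = waked_power_ratio(spacing * ROTOR_DIAMETER_M)
    print(f"{spacing}D spacing: downstream turbine yields {ratio:.0%} of free-stream power")
```

Under these assumptions the downstream turbine’s output recovers as spacing grows, with losses at close spacing of the same order as the 40 percent figure cited above. That trade-off between wake losses and land or sea area is exactly what farm-layout studies must weigh, and high-fidelity HPC simulation replaces this crude model with resolved flow physics.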

To this end, GE is partnering with ORNL to use simulations on the Summit supercomputer to improve efficiencies in offshore wind energy production.[141] As GE Research aerodynamics engineer Jing Li explained, “The Summit supercomputer will allow GE’s team to run computations that would otherwise be impossible … [thus] supporting research [that] could dramatically accelerate offshore wind power.”[142] The GE-ORNL collaboration focuses in particular on “study[ing] coastal low-level jets, which produce a distinct wind velocity profile of potential importance to the design and operation of future wind turbines.”[143] As Li explained, “We’re now able to study wind patterns that span hundreds of meters in height across tens of kilometers of territory down to the resolution of airflow over individual turbine blades … [which allows us] to understand poorly understood phenomena like coastal low-level jets in ways previously not possible.”[144]